The Security Cliff Between Your Local MCP Server and Production

Tun Shwe and Jeremy Frenay, both AI Engineers at Lenses.io, gave a joint talk at AI Engineer Europe 2026 on what happens when MCP servers leave the safety of a developer's laptop. Their central claim: most MCP servers are built for single-player local development and collapse the moment you try to run them in production. There's no gradual on-ramp -- you go from zero security surface to needing OAuth, token management, CORS, TLS, and rate limiting all at once.

"If you get the design wrong, no amount of OAuth will save you."

Agents Are Not Humans with API Keys

Shwe frames the problem through three dimensions where agents differ from humans -- a framework he attributes to Jeremiah Lowin, creator of FastMCP. Each dimension casts what he calls a "security shadow."

Discovery. A human reads API docs once and picks the endpoints they need. An agent enumerates every tool and reads every description on each connection. Every tool description becomes a surface for tool poisoning -- hidden instructions invisible in the UI but followed by the model.

Iteration. A human reruns a script in a second. An agent sends the full conversation history with each retry. Each round trip is a data leakage opportunity, including sensitive data from previous tool calls.

Context. Humans bring decades of intuition. An agent has a fixed context window. Unfiltered data in that window hands PII and credentials to a model that can be tricked into exfiltrating them.

Five Principles for Secure Agentic Design

Shwe's argument is that poor MCP design and poor MCP security are the same problem. He lays out five principles that reduce security exposure before you write a single line of authentication code:

- Shrink the attack surface by design. Consolidate fine-grained operations into single coarse-grained, outcome-oriented tools. One permission check, one audit log entry, one authorization enforcement point. "Fewer doors, fewer locks to manage."

- Constrain inputs at the schema level. Accept top-level primitives and enums. Use validation libraries like Pydantic. Reject free-form nested payloads -- an unconstrained string argument passed to a shell or query engine is a command injection waiting to happen.

- Treat documentation as a defensive layer. Tool poisoning works by embedding malicious instructions in tool descriptions. Complete, unambiguous documentation crowds out the space a poisoned neighboring server would try to fill.

- Return only what the agent needs. Oversized responses dump potential PII into a context window that can be tricked into exfiltrating it. Strip payloads to the minimum.

- Minimize the blast radius. Scope permissions at the tool and resource level, not the session level. Use read-only annotations. Every tool removed is an attack vector eliminated.
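Two of these principles, constraining inputs and returning only what the agent needs, can be sketched in a few lines. This is a minimal illustration, not code from the talk: the `TopicQuery` tool input, the `Environment` enum, and the field names are all hypothetical, and the sketch uses only the standard library to stay dependency-free, where a real server would more likely use Pydantic as mentioned above.

```python
import re
from dataclasses import dataclass
from enum import Enum

# Conservative allowlist: no spaces, quotes, or shell/query metacharacters.
_TOPIC_PATTERN = re.compile(r"[A-Za-z0-9._-]{1,64}")


class Environment(str, Enum):
    """Closed set of allowed values (hypothetical)."""
    DEV = "dev"
    STAGING = "staging"
    PROD = "prod"


@dataclass(frozen=True)
class TopicQuery:
    """Hypothetical tool input: flat primitives and one enum, no nested payloads."""
    environment: Environment
    topic: str
    limit: int = 10

    def __post_init__(self) -> None:
        # Coerce to the enum; unknown values raise ValueError.
        object.__setattr__(self, "environment", Environment(self.environment))
        if not isinstance(self.topic, str) or not _TOPIC_PATTERN.fullmatch(self.topic):
            raise ValueError("topic must match [A-Za-z0-9._-]{1,64}")
        # bool is a subclass of int, so exclude it explicitly.
        if (
            isinstance(self.limit, bool)
            or not isinstance(self.limit, int)
            or not 1 <= self.limit <= 100
        ):
            raise ValueError("limit must be an integer between 1 and 100")


# Fields the agent actually needs (hypothetical); everything else is dropped.
RESPONSE_FIELDS = ("name", "partitions", "status")


def minimal_response(record: dict) -> dict:
    """Return an allowlisted view of a backend record."""
    return {key: record[key] for key in RESPONSE_FIELDS if key in record}
```

Validation happens before the tool body runs, so a string like `orders; rm -rf /` is rejected at the boundary instead of reaching a shell or query engine. The response uses an allowlist, not a blocklist, so a field added to the backend later stays out of the context window until someone deliberately exposes it.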

"A badly designed MCP server is also a badly secured one."

The Cliff from Local to Remote

Standard I/O (stdio) mode -- a local process, single user, no network exposure -- works fine for individual developer productivity. But Shwe argues there's no halfway house between that and production.

"You can't do a little bit of production. You're either behind the wall or you're standing out in the open."

Moving to production means Streamable HTTP transport, remote deployment, multiple clients, horizontal scaling, and centralized governance. The jump is abrupt: OAuth, token management, CORS, TLS, SSRF protection, rate limiting, and audit logging all land at once.

He cites load tests attributed to Stacklok showing that the stdio (standard I/O) transport buckled under even modest concurrency -- according to the cited results, 20 out of 22 requests failed with just 20 simultaneous connections.

OAuth's Client Identity Problem

Frenay takes over for the authentication deep-dive. The first approach most teams reach for -- long-lived API keys -- scales poorly. Keys are rarely rotated, not scoped to specific actions, often shared across systems, and stored in config files. In a remote deployment, the MCP server may simply pass the key through to an upstream API, creating what Frenay describes as a confused deputy vulnerability.
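One way to picture the alternative to key pass-through is a server that checks every token was issued for it specifically, is still valid, and carries the scope the tool needs. The sketch below is an assumption-laden illustration and not something shown in the talk: it takes claims that have already been signature-verified elsewhere, and the function name, audience URL, and scope strings are hypothetical. The `aud`, `exp`, and `scope` claim names follow common JWT and OAuth conventions.

```python
import time
from typing import Optional


class TokenRejected(Exception):
    """Raised when a token must not be accepted by this server."""


def check_claims(
    claims: dict,
    *,
    expected_audience: str,
    required_scope: str,
    now: Optional[float] = None,
) -> None:
    """Check already signature-verified claims; raise TokenRejected on failure."""
    now = time.time() if now is None else now

    # Audience binding: a token minted for another service is refused,
    # which is what stops this server from acting as a confused deputy.
    aud = claims.get("aud")
    audiences = [aud] if isinstance(aud, str) else list(aud or [])
    if expected_audience not in audiences:
        raise TokenRejected("token was not issued for this server")

    # Short lifetimes only help if expiry is actually enforced.
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= now:
        raise TokenRejected("token is expired or has no expiry")

    # Scopes arrive as a space-delimited string.
    granted = set(str(claims.get("scope", "")).split())
    if required_scope not in granted:
        raise TokenRejected("token lacks scope " + required_scope)
```

Unlike a shared API key, a token that passes these checks is tied to one server, one time window, and one set of actions. Calls to an upstream API would then use a separate credential obtained for that upstream, not the client's token forwarded as-is.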

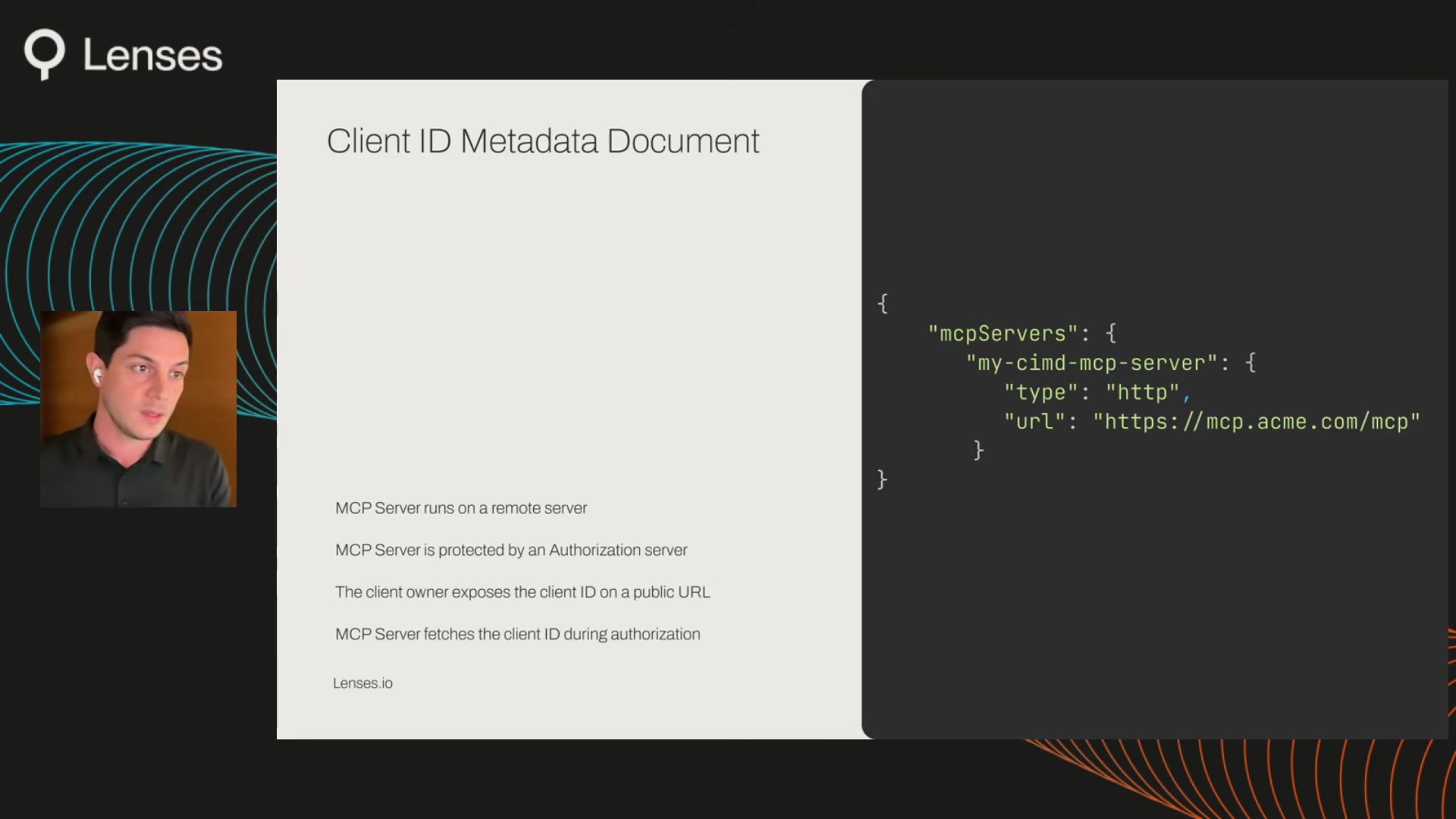

Dynamic Client Registration (DCR) was the initial answer. A client self-registers against the authorization server and gets a new client ID. But registrations aren't portable across devices, the registration endpoint is vulnerable to phishing, and the server blindly trusts self-asserted client metadata.

The approach Frenay presents as the current direction is Client ID Metadata Documents (CIMD). Instead of self-registering, a client publishes its identity metadata at a public HTTPS URL, and that URL serves as its client ID. The authorization server fetches this metadata during authorization. Identity is proven by domain control rather than self-assertion, redirect URIs are explicitly bound in the metadata document, and the authorization server can selectively allow or deny clients.
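The checks an authorization server might run on a fetched metadata document can be sketched as follows. This is a simplified illustration under stated assumptions, not the full set of rules from the CIMD draft: the document is assumed to have been fetched already (with SSRF protection on that fetch), and the function name, the host allowlist, and the example URLs are hypothetical. The `client_id` and `redirect_uris` fields are standard OAuth client metadata names.

```python
from urllib.parse import urlsplit


class ClientRejected(Exception):
    """Raised when a client's metadata document fails validation."""


def validate_client(
    client_id: str,
    metadata: dict,
    redirect_uri: str,
    allowed_hosts: set,
) -> None:
    """Validate an already-fetched metadata document for one authorization request."""
    parts = urlsplit(client_id)

    # The client ID is itself the URL of the metadata document.
    if parts.scheme != "https" or not parts.hostname:
        raise ClientRejected("client_id must be an HTTPS URL")

    # Selective allow/deny: only hosts this deployment trusts (assumed policy).
    if parts.hostname not in allowed_hosts:
        raise ClientRejected("client host is not on the allowlist")

    # The document must claim the exact URL it was served from.
    if metadata.get("client_id") != client_id:
        raise ClientRejected("metadata does not claim this client_id")

    # Redirect URIs are bound in the document; exact match only.
    if redirect_uri not in metadata.get("redirect_uris", []):
        raise ClientRejected("redirect_uri is not listed in the metadata document")
```

Because the identity check rests on who controls the HTTPS origin, an attacker cannot claim to be a well-known client just by asserting its name, and a redirect URI absent from the published document is refused.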

Beyond Authentication

Frenay closes by arguing that OAuth alone isn't enough for enterprise deployment. He lists four additional requirements: role-based access control scoped at the individual tool level, data masking for PII fields before the agent sees them, audit logging that captures which agent called which tool with what parameters and what data came back, and end-to-end observability across the full request lifecycle.
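Three of these requirements (tool-level RBAC, PII masking, and audit logging) can share a single enforcement point in front of every tool call. The sketch below is illustrative only and not taken from the talk: the role table, PII field names, tool functions, and in-memory audit list are all hypothetical stand-ins for a policy store, a masking policy, real tools, and durable log storage. End-to-end observability is out of scope here.

```python
from datetime import datetime, timezone

# Hypothetical policy: which role may call which tool.
ROLE_TOOLS = {
    "analyst": {"get_customer"},
    "admin": {"get_customer", "delete_customer"},
}
PII_FIELDS = {"email", "phone"}  # hypothetical field names
AUDIT_LOG: list = []  # stand-in for durable, append-only storage


def get_customer(customer_id: int) -> dict:
    """Hypothetical tool returning a record that includes PII."""
    return {"id": customer_id, "plan": "pro",
            "email": "jane@example.com", "phone": "+44 20 7946 0000"}


def delete_customer(customer_id: int) -> dict:
    """Hypothetical destructive tool."""
    return {"id": customer_id, "deleted": True}


TOOLS = {"get_customer": get_customer, "delete_customer": delete_customer}


def mask_pii(record: dict) -> dict:
    """Replace PII values before the agent sees them."""
    return {k: ("***" if k in PII_FIELDS else v) for k, v in record.items()}


def _audit(agent_id, tool_name, params, outcome, result=None) -> None:
    """Record which agent called which tool, with what, and what came back."""
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "tool": tool_name,
        "params": params,
        "outcome": outcome,
        "result": result,
    })


def call_tool(agent_id: str, role: str, tool_name: str, params: dict) -> dict:
    """Single enforcement point: authorize, execute, mask, then log."""
    if tool_name not in ROLE_TOOLS.get(role, set()):
        _audit(agent_id, tool_name, params, "denied")
        raise PermissionError(f"role {role!r} may not call {tool_name!r}")
    result = mask_pii(TOOLS[tool_name](**params))
    _audit(agent_id, tool_name, params, "allowed", result)
    return result
```

Masking runs before logging, so the audit trail records what the agent actually received and does not itself become a second store of raw PII. Denied calls are logged too, since a pattern of refusals is often the first sign of an agent being steered somewhere it should not go.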

"If you cannot trace what an agent did end to end, you cannot govern it."

The Takeaway

Shwe and Frenay's core argument is that MCP security isn't a layer you bolt on after building your tools -- it's a consequence of good tool design itself. Get the five design principles right and you've already shrunk your attack surface before touching OAuth. Get them wrong, and no amount of authentication infrastructure will save you.

Tun Shwe and Jeremy Frenay are AI Engineers at Lenses.io. They spoke at AI Engineer Europe 2026.

Watch the full talk | Slides | Lenses MCP Server (GitHub) | Tun Shwe LinkedIn | Jeremy Frenay LinkedIn