AI Agents Can't Walk Upstairs and Ask for Help

Juan Herreros Elorza, Team Lead on the Cloud Native Technology team at Banking Circle, makes a deceptively simple argument: the platform engineering practices that have always been "best practices" are now prerequisites. The practices themselves haven't changed. What has changed is that AI coding agents have become first-class users of internal developer platforms -- and agents can't compensate for the gaps that humans have been working around for years.

"If this situation was tricky for a developer, this situation is essentially impossible for a machine, because the machine is not going to go and try the pipeline and then go up to the second floor and talk to the person in that other team."

The New Developer Who Can't Improvise

Juan opens with a story anyone in a large engineering org will recognize. A new developer joins, writes their application, and hits the deployment wall. They copy a CI pipeline from a teammate. They chase down someone on the infrastructure team for a database. They wait days. Eventually, through Slack messages, hallway conversations, and borrowed tribal knowledge, they get their service running.

Humans muddle through this. Agents cannot. An agent can't wander over to the infrastructure team's desk. It can't read the room to figure out which Slack channel has the person who knows the answer. Every place where your platform relies on implicit knowledge or human intervention is a place where an agent hits a dead end.

He frames this not as doom but as opportunity. Organizations that have struggled to get buy-in for platform improvements now have executive attention on AI. That attention can fund the work that platform teams have been advocating for all along.

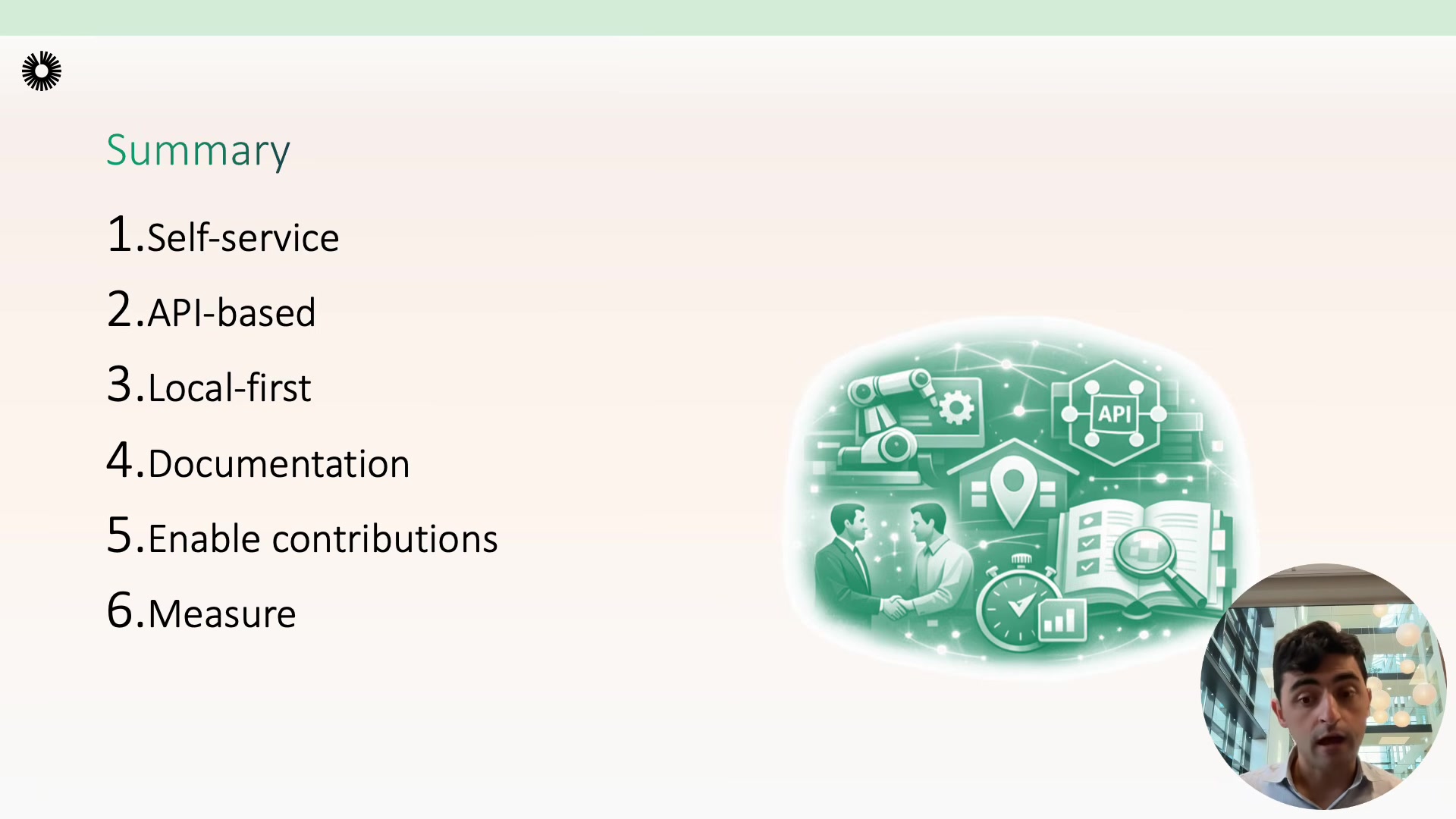

Six Principles for Agent-Ready Platforms

Drawing from his experience building an internal developer platform at a fintech, Herreros Elorza lays out six principles. None of them are new -- that's his point.

1. Self-service. Remove humans from all provisioning and deployment paths. If a developer or agent needs a resource, they should get it without filing a request or waiting on another team. Juan is specific about what doesn't count:

"If it is technically self-service, but it requires fetching some building blocks from five different places and putting them together and then triggering a flow somewhere else, then it's not really self-service."

2. API-based interfaces. Expose everything through well-defined APIs with schema validation. Agents are good at calling structured APIs and discovering what's available. CLIs, MCP servers, or other wrappers on top are fine, but the API is the foundation. With a published schema, an agent can construct a valid request up front and gets a precise, machine-readable error when it gets one wrong.

3. Local-first. Since agents typically run on the developer's machine, make it possible to validate everything locally. Don't force an agent to push to version control and wait for a remote CI pipeline to fail minutes later. Local validation means tight iteration loops.

4. Documentation. Two strategies depending on scale -- docs next to the code for smaller repos, centralized docs for platform-wide concerns. Serve documentation via API so agents get structured content rather than parsing HTML. Use agent-specific instruction files such as AGENTS.md or CLAUDE.md to describe build, test, deploy, and verification conventions.

5. Encourage contributions. Agents lower the barrier to contributing to platform code, so platform teams should expect more pull requests from product teams. But -- and Herreros Elorza emphasizes this -- the platform team still owns maintenance. Combine hard guardrails (security policies, compliance checks) with soft guidance (instruction files that steer agent behavior toward good patterns).

6. Measure outcomes. Use metrics to verify that platform changes actually helped. He references DORA metrics for delivery performance, reliability metrics for operational health, and platform-specific metrics like support request volume as a proxy for self-service effectiveness.
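
To make principles 2 and 3 concrete, here is a minimal sketch of schema validation that an agent could run locally before anything is pushed. It is an illustration, not something shown in the talk: the SCHEMA fields, the allowed values, and the validate_request helper are all hypothetical, and a real platform would more likely publish a JSON Schema or OpenAPI document and validate against that.

```python
# Hypothetical schema for a self-service database request. The field names
# and limits are assumptions made for illustration only.
SCHEMA = {
    "name": {"type": str, "required": True},
    "engine": {"type": str, "required": True, "allowed": {"mysql", "postgres"}},
    "storage_gb": {"type": int, "required": True, "min": 1, "max": 1024},
    "team": {"type": str, "required": True},
}


def validate_request(request: dict) -> list[str]:
    """Return a list of human- and machine-readable errors (empty if valid)."""
    errors = []
    for field, rule in SCHEMA.items():
        if field not in request:
            if rule.get("required"):
                errors.append(f"{field}: missing required field")
            continue
        value = request[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, rule["type"]):
            errors.append(f"{field}: expected {rule['type'].__name__}")
            continue
        if "allowed" in rule and value not in rule["allowed"]:
            errors.append(f"{field}: must be one of {sorted(rule['allowed'])}")
        if "min" in rule and value < rule["min"]:
            errors.append(f"{field}: must be >= {rule['min']}")
        if "max" in rule and value > rule["max"]:
            errors.append(f"{field}: must be <= {rule['max']}")
    # Unknown fields are reported too, so typos fail fast instead of being ignored.
    for field in sorted(request.keys() - SCHEMA.keys()):
        errors.append(f"{field}: unknown field")
    return errors


if __name__ == "__main__":
    draft = {"name": "orders-db", "engine": "oracle", "storage_gb": 0}
    for problem in validate_request(draft):
        print(problem)
```

The point of the sketch is the feedback loop: every error names the field and the rule it broke, so an agent can correct the request and retry in seconds instead of waiting for a remote pipeline to fail.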

Rethinking Observability for Agents

One point Juan singles out deserves its own attention. Humans verify deployments by looking at dashboards -- graphs, charts, color-coded status panels. Agents can't do that.

"You also need to think: how does observability look like if the prime user is going to be an AI agent?"

Logs, metrics, and traces need to be available through APIs, CLIs, or MCP servers so agents can programmatically verify that their work succeeded. This is a subtle but important shift: observability systems were built for human visual consumption. Making them machine-readable is a prerequisite for agents that can autonomously deploy, verify, and iterate.
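
As a rough sketch of what "programmatically verify" could look like, the snippet below evaluates a metrics summary the way an agent might after a deployment. Everything specific here is an assumption: the payload shape (requests_total, requests_failed, latency_p95_ms), the thresholds, and the verify_deployment helper are hypothetical, and in practice the JSON would come from whatever API, CLI, or MCP server the observability stack exposes.

```python
import json


def verify_deployment(
    payload: str,
    max_error_rate: float = 0.01,
    max_p95_ms: float = 500.0,
) -> dict:
    """Decide whether a deployment looks healthy from a JSON metrics summary.

    The payload format is hypothetical; adapt the keys to the real metrics API.
    """
    data = json.loads(payload)
    total = data["requests_total"]
    failed = data["requests_failed"]
    error_rate = failed / total if total else 0.0

    reasons = []
    if total == 0:
        # No traffic means there is nothing to verify yet.
        reasons.append("no traffic observed yet")
    if error_rate > max_error_rate:
        reasons.append(
            f"error rate {error_rate:.2%} exceeds {max_error_rate:.2%}"
        )
    if data["latency_p95_ms"] > max_p95_ms:
        reasons.append(
            f"p95 latency {data['latency_p95_ms']} ms exceeds {max_p95_ms} ms"
        )
    return {"healthy": not reasons, "reasons": reasons}


if __name__ == "__main__":
    sample = json.dumps(
        {"requests_total": 2000, "requests_failed": 100, "latency_p95_ms": 320}
    )
    print(verify_deployment(sample))
```

The result is a structured verdict with explicit reasons rather than a chart, which is the form an agent needs in order to decide whether to proceed, roll back, or iterate.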

Use the Momentum

Juan's closing point is practical. AI agents don't require a new set of platform engineering principles -- they make the existing ones non-negotiable. And right now, there's organizational willpower to fund the work.

"Take advantage. Everyone from the executive level to the individual contributors are looking at AI now. It is a very hot topic. So you can use AI as the excuse to implement some best practices that, again, were always best practices if you didn't have the chance to do it until now."

Juan Herreros Elorza, Team Lead on the Cloud Native Technology team at Banking Circle, spoke at AI Engineer Europe 2026.